Happy Horse 1.0 AI Video Generator: #1 Arena-Ranked Text & Image to Video

Use Happy Horse 1.0 in Topview — the top-ranked AI video model on Artificial Analysis Arena. Generate cinematic 1080p video with synchronized audio, multi-shot storytelling, and 7-language lip sync from text or image prompts. Try free.

[The video begins with a wide cinematic shot of meteorites raining down on a futuristic city skyline [image2]. It quickly cuts to a low-angle medium shot of a fighter standing in the ruins. The camera uses a low-angle perspective to emphasize power, with fast-paced cuts and a deep focus on the falling fireballs in the background.] [A high-stakes, high-intensity duel between a fighter[image1] and a shadowy dark knight amidst a ruined city. The battle is characterized by rapid sword clashing that emits sparks, powerful lightning strikes that illuminate the dark environment, and heavy impacts that cause the ground to shatter and release clouds of dust.] [Professional camera shooting], [Professional photography pro style, Cinematic fantasy action], [Epic rhythmic orchestral music with industrial beats and intense combat sound effects], [Lightning and electrical magic effects, high-fidelity particle simulations, sparks from sword clashes, motion blur, and cinematic speed ramping]

Happy Horse 1.0 Output Samples

Real videos generated by Happy Horse 1.0 — with synchronized audio in a single pass.

“A child posing for photos — candid moments captured with natural lighting and genuine expressions.”

“A rubber band ball bounces down a staircase, each impact full of uncertainty. The ball suddenly veers left into a bathroom, ricochets off the tiles repeatedly, and finally lands in the toilet. Nobody picks it up.”

TL;DR

Happy Horse 1.0 is the #1 ranked AI video generation model (April 2026) with 15B parameters, joint video+audio output, 7-language lip sync, and open-source availability. Generate 1080p video in ~38 seconds. Try it free on Topview alongside all leading AI video models.

What Happy Horse 1.0 Does Best

Happy Horse 1.0 leads the Artificial Analysis Arena for both text-to-video and image-to-video. These use cases show where its strengths matter most for real production workflows.

Multi-Shot Storytelling

Generate coherent multi-shot sequences with persistent character identity, scene transitions, and narrative flow that single-shot models cannot match.

"Character-led lifestyle moment featuring a stylish subject in a modern environment. Use natural body movement, soft fashion-forward lighting, light fabric motion, and a smooth handheld or tracking camera that keeps the subject expressive, polished, and brand-friendly."

High-Fidelity Visual Quality

Deliver premium visual output with sharp surface detail, accurate reflections, smooth motion, and cinematic lighting that holds up in professional production workflows.

"Premium product commercial with a hero item centered in a dark studio setup. Use a smooth push-in, subtle orbit movement, glossy reflections, controlled highlight rolloff, and a clean luxury ad rhythm that keeps the product sharp and dominant throughout the shot."

Joint Video + Audio Generation

Produce video with synchronized dialogue, ambient sounds, and Foley effects in a single forward pass, eliminating the need for separate audio post-production.

"Short cinematic brand sequence with strong atmosphere, layered depth, and purposeful movement through the scene. Emphasize moody lighting, story-driven framing, steady forward momentum, and a premium commercial tone that feels dramatic without losing clarity."

Fast Cinematic Production

Generate 1080p video in ~38 seconds on an H100 GPU with only 8 denoising steps via DMD-2 distillation, 30% faster than Seedance 1.5 Pro or Kling 2.1.

"Stylized concept clip with exaggerated art direction, strong visual contrast, and playful cinematic motion. Keep the world design cohesive while using a clean tracking move, distinctive textures, and an imaginative tone that feels crafted for a concept teaser or social hook."

What Is Happy Horse 1.0?

Happy Horse 1.0 is a 15-billion-parameter open-source AI video generation model that tops the Artificial Analysis Video Arena leaderboard for both text-to-video (Elo 1,341) and image-to-video (Elo 1,402). It uses a unified 40-layer self-attention Transformer architecture to jointly generate video and audio from text or image prompts in a single pipeline. On Topview, you can test Happy Horse 1.0 alongside other leading models like Seedance 2.0, Kling 3.0, and Veo 3.2, compare outputs side by side, and ship the best result for your campaign without committing to a single model.

Unified Video + Audio Architecture

A single self-attention Transformer handles text, image, video, and audio tokens in one sequence, producing synchronized multimodal output without cross-attention modules.

#1 Arena-Ranked Quality

Achieved Elo 1,341 (T2V) and 1,402 (I2V) on Artificial Analysis, outperforming Seedance 2.0, Kling 3.0, and PixVerse V6 in blind human preference tests with 3,000+ votes.

Open-Source with Commercial Rights

Fully open-source with base models, distilled models, super-resolution modules, and inference code available for custom fine-tuning and commercial deployment.

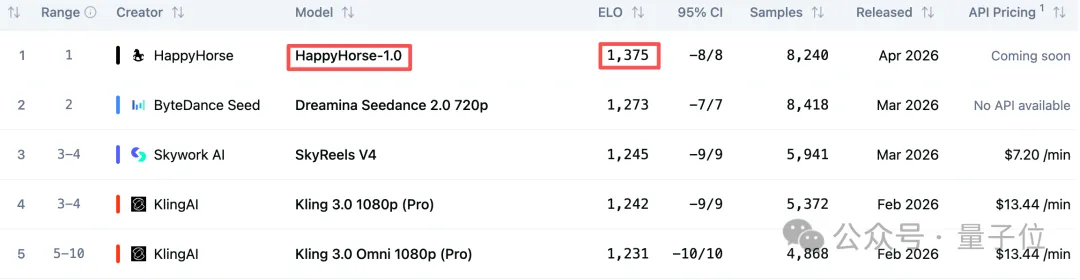

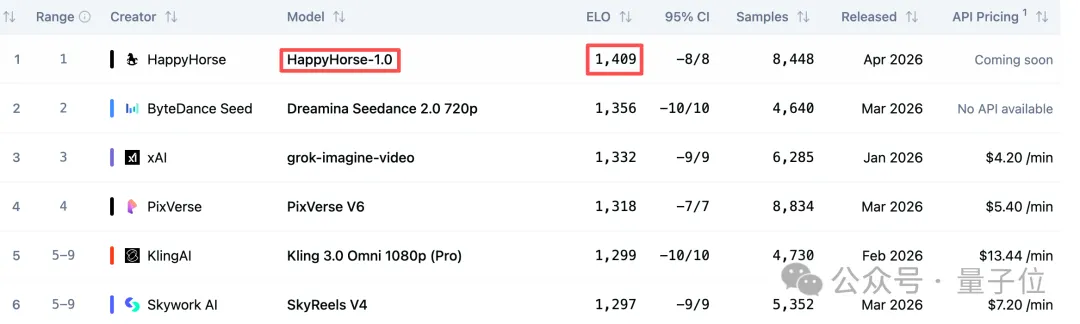

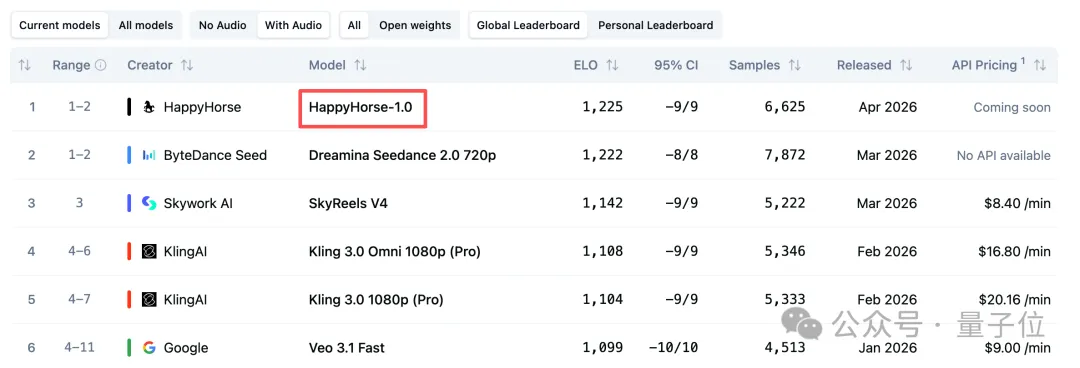

Happy Horse 1.0 Arena Rankings

#1 across all categories on the Artificial Analysis Video Arena, based on 3,000+ blind human preference tests.

Text-to-Video

68 Elo points ahead of Seedance 2.0 (#2 at 1,273), based on the scores listed on this page (1,341 vs 1,273). The gap between #2 and #10 is only ~50 points, so Happy Horse's lead is wider than the spread across the rest of the top ten.

Image-to-Video

All-time record Elo score on the Image-to-Video Arena, surpassing every closed-source and open-source model tested.

With Audio

First place in joint video + audio generation, outperforming Google Veo 3.1 and ByteDance Seedance 2.0.

Source: Artificial Analysis Video Arena, April 2026. Rankings based on blind human preference tests where users vote without knowing which model generated each video.
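
To put the Elo figures in perspective, the sketch below converts a rating gap into an expected head-to-head preference rate. It assumes the standard Elo logistic formula with a 400-point scale. The Arena's exact rating method is not described on this page, so treat the result as a rough guide. The function name `expected_win_rate` is illustrative.

```python
def expected_win_rate(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected share of votes for A over B under the standard Elo formula (assumed)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))

# Scores quoted on this page (April 2026).
t2v = expected_win_rate(1341, 1273)  # Happy Horse 1.0 vs Seedance 2.0, text-to-video
i2v = expected_win_rate(1402, 1355)  # Happy Horse 1.0 vs Seedance 2.0, image-to-video

print(f"T2V gap: {1341 - 1273} points, expected win rate {t2v:.1%}")
print(f"I2V gap: {1402 - 1355} points, expected win rate {i2v:.1%}")
```

Under these assumptions, the quoted text-to-video scores imply that Happy Horse 1.0 would be preferred in a little under 60% of blind comparisons against the #2 model.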

Happy Horse 1.0 Blind Test Results

Real comparisons from the Artificial Analysis Video Arena. Users vote without knowing which model generated each video.

“A retro, 70s Urban Grit style scene shows a lone astronaut wandering through a desolate Martian landscape with a blood-red sky.”

Happy Horse captures the full-body walking cycle with realistic foot contact and cinematic wide shot, while the competitor resorts to a static close-up.

“A politician in her early 50s speaks at a press conference, with flashing cameras and reporters typing furiously.”

Happy Horse delivers dynamic multi-person motion with camera flashes, while the competitor shows a static wide shot lacking the energy described in the prompt.

“A craftsman focused at work in a quiet workshop, camera slowly pulling in to reveal fine detail on the subject's face.”

Happy Horse preserves realistic facial textures on close-up, while the competitor produces overly smooth skin that breaks the realism.

What the AI Community Is Saying

Industry leaders and media are taking notice of Happy Horse 1.0's unprecedented arena performance.

"happy horse is insanely happy."

"The gap is staggering — a tier-breaking lead of 100+ Elo points. From #2 to #10, the total spread is only about 50 points."

"Happy Horse First Output. This model beats Seedance 2 on Artificial Analysis..."

Who Built Happy Horse 1.0?

Built by the Future Life Lab of Taotian Group (Alibaba), led by the architect of Kuaishou's Kling models.

Zhang Di

Head of Future Life Lab, Taotian Group (Alibaba)

Zhang Di is the technical lead behind Happy Horse 1.0. He previously served as Vice President of Technology at Kuaishou, where he architected the Kling 1.0 and 2.0 video generation models. Before that, he spent a decade at Alibaba as Senior Technical Expert leading large-scale ML infrastructure. He holds a Master's degree from Shanghai Jiao Tong University.

Career Timeline

Senior Technical Expert, Alibaba

Led large-scale data and ML engineering for Alibaba Mama (ad platform)

VP of Technology, Kuaishou

Architected Kling 1.0 and 2.0 video generation models

Head of Future Life Lab, Taotian Group

Leading Happy Horse 1.0 development at Alibaba

Happy Horse 1.0 is developed by the Future Life Lab at Taotian Group, part of the Alibaba ecosystem. The team focuses on next-generation multimodal AI for content creation and commerce.

Happy Horse 1.0: Key Takeaways

- Happy Horse 1.0 is a 15-billion-parameter open-source AI video model that ranks #1 on the Artificial Analysis Video Arena for both text-to-video (Elo 1,341) and image-to-video (Elo 1,402) as of April 2026.

- It uses a unified 40-layer self-attention Transformer with a sandwich architecture to jointly generate video and audio in a single forward pass, without cross-attention modules.

- The model supports phoneme-level lip sync in 7 languages (English, Mandarin, Cantonese, Japanese, Korean, German, French) and generates synchronized dialogue, ambient sounds, and Foley effects natively.

- At 1080p resolution, Happy Horse 1.0 renders video in approximately 38 seconds on an H100 GPU using 8-step DMD-2 distilled inference, 30% faster than Seedance 1.5 Pro or Kling 2.1.

- The model is fully open-source with commercial rights, including base models, distilled models, super-resolution modules, and inference code for custom fine-tuning.

- On Topview, users can test Happy Horse 1.0 alongside Seedance 2.0, Kling 3.0, Veo 3.2, and other top models in a single workspace with side-by-side comparison and team collaboration.

How to Prompt Happy Horse 1.0 for Better Results

Happy Horse 1.0 responds well to structured prompts that specify duration, motion, camera work, and audio cues. Here's how to get more consistent output.

Specify duration upfront

Start your prompt with the target length (e.g., "8s duration:") so the model can pace the action correctly.

Describe motion in sequence

Break the action into a timeline: what happens first, what follows, how it ends. The model handles multi-beat sequences well.

Include audio direction

Since Happy Horse generates audio natively, add audio cues like "ambient forest sounds," "dialogue in English," or "footsteps on gravel" to get synchronized output.

Use camera language

Terms like tracking shot, orbit, push-in, aerial view, and close-up give the model specific shot direction instead of vague requests.

Leverage character references

For multi-shot stories, reference characters by label (@Image1, @Image2) to maintain identity consistency across scenes.

Match aspect ratio to platform

Set 16:9 for YouTube/landing pages, 9:16 for TikTok/Reels, 1:1 for social feeds before generating.
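
The six tips above can be combined into a small prompt-assembly helper. This is a minimal sketch: `Shot`, `build_prompt`, and their fields are hypothetical and not part of any Happy Horse or Topview API. The output is plain text you would paste into the prompt box. The aspect ratio is returned separately, on the assumption that it is a generation setting rather than prompt text.

```python
from dataclasses import dataclass, field

# Platform-to-ratio mapping taken from the aspect ratio tip above.
ASPECT_RATIOS = {"youtube": "16:9", "landing_page": "16:9",
                 "tiktok": "9:16", "reels": "9:16", "social_feed": "1:1"}

@dataclass
class Shot:
    duration_s: int
    beats: list[str]                 # motion in order: first, next, ending
    camera: str                      # e.g. "low-angle tracking shot"
    audio: list[str] = field(default_factory=list)
    characters: list[str] = field(default_factory=list)  # labels such as "@Image1"

def build_prompt(shot: Shot, platform: str) -> tuple[str, str]:
    """Return (prompt_text, aspect_ratio). Hypothetical helper for illustration."""
    parts = [f"{shot.duration_s}s duration:"]
    if shot.characters:
        parts.append(" and ".join(shot.characters) + " maintain consistent appearance.")
    parts.append(", then ".join(shot.beats) + ".")
    parts.append(f"Camera: {shot.camera}.")
    if shot.audio:
        parts.append("Audio: " + ", ".join(shot.audio) + ".")
    return " ".join(parts), ASPECT_RATIOS[platform]

prompt, ratio = build_prompt(
    Shot(duration_s=8,
         beats=["horse gallops left to right", "slows to a trot", "turns to face camera"],
         camera="low-angle tracking shot, smooth lateral pan",
         audio=["galloping hooves on dirt", "wind", "distant birds"]),
    platform="tiktok",
)
print(ratio)
print(prompt)
```

Keeping each element as a separate field makes it easy to see at a glance which tip a draft prompt has skipped.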

Basic vs Happy Horse-Ready Prompt

| Element | Basic Prompt | Happy Horse-Ready |
|---|---|---|
| Duration | (none) | "8s duration:" prefix |
| Motion | make it move | "horse gallops left to right, slows to a trot, turns to face camera" |
| Audio | (none) | "galloping hooves on dirt, wind, distant birds" |
| Camera | cinematic | "low-angle tracking shot, smooth lateral pan" |
| Characters | two people | "@Image1 and @Image2 interact, maintaining consistent appearance" |
| Action count | lots happening | "one primary action per 5s segment" |
| Platform | make a video | "9:16 vertical, optimized for TikTok" |
| Phrasing | don't make it blurry | "sharp focus, crisp detail, high-definition textures" |
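
The contrasts in the table can also be turned into a quick pre-flight check on a draft prompt. The sketch below is a heuristic only: `check_prompt`, the keyword lists, and the regular expression are assumptions chosen for illustration, not rules the model enforces.

```python
import re

# Camera terms are the ones named in the prompting tips; the other lists are illustrative.
CAMERA_TERMS = ("tracking shot", "orbit", "push-in", "aerial view", "close-up")
AUDIO_HINTS = ("sound", "audio", "dialogue", "music", "footsteps", "hooves")
NEGATIVE_PHRASES = ("don't", "do not", "avoid")

def check_prompt(prompt: str) -> list[str]:
    """Return warnings for elements the table recommends but the prompt lacks."""
    text = prompt.lower()
    warnings = []
    if not re.match(r"\s*\d+s duration:", text):
        warnings.append("missing duration prefix, e.g. '8s duration:'")
    if not any(term in text for term in CAMERA_TERMS):
        warnings.append("no specific camera term")
    if not any(hint in text for hint in AUDIO_HINTS):
        warnings.append("no audio direction")
    if any(phrase in text for phrase in NEGATIVE_PHRASES):
        warnings.append("negative phrasing; describe the desired look instead")
    return warnings

basic = "make it move, cinematic, don't make it blurry"
ready = ("8s duration: horse gallops left to right, slows to a trot, turns to face camera. "
         "Low-angle tracking shot, smooth lateral pan. Galloping hooves on dirt, wind, "
         "distant birds. Sharp focus, crisp detail.")

for warning in check_prompt(basic):
    print("basic:", warning)
print("ready:", check_prompt(ready))
```

Simple substring matching will miss paraphrases and can misfire on unrelated words, so the warnings are prompts for a second look rather than hard failures.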

How to Use Happy Horse 1.0 in Topview (3 Steps)

Enter a prompt

Describe the video you want, including duration, motion, and audio cues.

Generate video

Click generate and Happy Horse 1.0 creates your video with synchronized audio.

Download the video

Export a clean MP4 with audio when you're ready.

Happy Horse 1.0 Core Capabilities

Happy Horse 1.0 combines video and audio generation in a single architecture, delivering capabilities that most models require separate pipelines to achieve.

Joint Video + Audio Synthesis

Generate video with dialogue, ambient sounds, and Foley effects in one forward pass, no separate audio model needed.

Multilingual Lip Sync (7 Languages)

Phoneme-level lip synchronization in English, Mandarin, Cantonese, Japanese, Korean, German, and French with ultra-low word error rate.

Native 1080p at 38s

Render 1080p video in ~38 seconds on an H100 GPU with 8-step DMD-2 distilled inference, 30% faster than Seedance 1.5 Pro or Kling 2.1.

Multi-Shot Storytelling

Produce coherent multi-shot sequences with persistent character identity and smooth scene transitions, unlike single-shot models.

15B Parameter Transformer

40-layer unified self-attention architecture with sandwich design: modality-specific layers at start/end, 32 shared layers in the middle.
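
The sandwich layout described above can be written out as a simple layer plan. This sketch is illustrative only: the page gives 40 layers in total with 32 shared, which leaves 8 modality-specific layers, but it does not say how those 8 are split between the start and the end. The even 4/4 split below is an assumption, as are the names `layer_plan`, `"modality_specific"`, and `"shared"`.

```python
def layer_plan(total: int = 40, shared: int = 32, head: int = 4) -> list[str]:
    """Label each layer as modality-specific or shared (the 4/4 split is assumed)."""
    tail = total - shared - head
    if head < 0 or tail < 0:
        raise ValueError("head and shared layers exceed the total layer count")
    return (["modality_specific"] * head
            + ["shared"] * shared
            + ["modality_specific"] * tail)

plan = layer_plan()
print(len(plan), plan.count("shared"), plan.count("modality_specific"))
print(plan[3], plan[4], plan[35], plan[36])
```

With the assumed split, layers 0 to 3 and 36 to 39 are modality-specific and layers 4 to 35 are shared across text, image, video, and audio tokens.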

Open Source + Commercial License

Base model, distilled model, super-resolution module, and inference code all available for fine-tuning and commercial use.

Happy Horse 1.0 Technical Specifications

Happy Horse 1.0 vs Other AI Video Models

Happy Horse 1.0 leads the Artificial Analysis Arena. Here's how it compares to the top AI video models across key metrics.

| Metric | Happy Horse 1.0 (#1 Ranked) | Seedance 2.0 | Kling 3.0 | Veo 3.2 | Sora 2 | Wan 2.7 |
|---|---|---|---|---|---|---|
| Arena Rank (T2V) | #1 (Elo 1,341) | #2 (Elo 1,273) | #4 (Elo 1,241) | N/A | N/A | N/A |
| Arena Rank (I2V) | #1 (Elo 1,402) | #2 (Elo 1,355) | #5 (Elo 1,297) | N/A | N/A | N/A |
| Max Duration | 10s | 15s | 25s | 10s | 25s | 15s |
| Resolution | 1080p | 1080p | 4K/60fps | 1080p | 1080p | 1080p |
| Native Audio | Yes (joint) | Yes | Yes | Yes | No | No |
| Lip Sync Langs | 7 | 8+ | Limited | Limited | No | No |
| Parameters | 15B | Undisclosed | Undisclosed | Undisclosed | Undisclosed | 14B |
| Open Source | Yes | No | No | No | No | Yes |
| Best At | Multi-modal joint gen | Multi-input flexibility | Long high-spec shots | Audio-rich realism | Prompt-led cinema | Reference workflows |
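
For readers who want to filter the comparison programmatically, the sketch below encodes three rows of the table as plain Python data and selects models by requirement. The values are copied from the table above. The `MODELS` structure and the `pick` function are hypothetical and exist only for illustration.

```python
# Max Duration, Native Audio, and Open Source rows from the comparison table.
MODELS = {
    "Happy Horse 1.0": {"max_duration_s": 10, "native_audio": True,  "open_source": True},
    "Seedance 2.0":    {"max_duration_s": 15, "native_audio": True,  "open_source": False},
    "Kling 3.0":       {"max_duration_s": 25, "native_audio": True,  "open_source": False},
    "Veo 3.2":         {"max_duration_s": 10, "native_audio": True,  "open_source": False},
    "Sora 2":          {"max_duration_s": 25, "native_audio": False, "open_source": False},
    "Wan 2.7":         {"max_duration_s": 15, "native_audio": False, "open_source": True},
}

def pick(min_duration_s: int = 0, need_audio: bool = False,
         need_open_source: bool = False) -> list[str]:
    """Return model names that satisfy every requested requirement."""
    return [name for name, spec in MODELS.items()
            if spec["max_duration_s"] >= min_duration_s
            and (spec["native_audio"] or not need_audio)
            and (spec["open_source"] or not need_open_source)]

print(pick(need_audio=True, need_open_source=True))
print(pick(min_duration_s=15, need_audio=True))
```

This kind of lookup makes the trade-off in the table explicit: requiring both native audio and open-source availability narrows the field differently than requiring longer clips.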

Happy Horse 1.0 in Action

See how Happy Horse 1.0 performs in real-world tests and comparisons with other leading AI video models.

Happy Horse 1.0 Quality Review

A detailed look at Happy Horse 1.0's motion quality, facial expressions, and cinematic output.

Happy Horse 1.0 Speed Test

Testing generation speed — about 100 seconds for an 8-second image-to-video clip.

AI Video Model Comparison 2026

Side-by-side comparison with Seedance 2.0, Kling 3.0, and other leading models.

Why Use Happy Horse 1.0 on Topview

Topview gives you Happy Horse 1.0 alongside every other top model in one workspace, so you can find the best output for each project without switching tools.

All-in-One Model Access

Test Happy Horse 1.0 alongside Veo, Sora, Kling, Seedance, and other top models in one Board.

Side-by-Side Comparison

Generate the same prompt across multiple models and compare outputs to find the best fit for your campaign.

Faster Production

Go from prompt to ad-ready video without switching between tools or manual audio syncing.

Team Collaboration

Share outputs, leave comments, and align on the best variation with teammates.

Marketing Workflow Integration

Use Happy Horse outputs for product ads, hero visuals, social content, and landing-page media in one place.

Single Subscription

Access Happy Horse 1.0 and all other supported models under one Topview plan instead of juggling separate subscriptions.

Start Creating with Happy Horse 1.0

Generate #1 arena-ranked AI video with joint audio, 7-language lip sync, and multi-shot storytelling. Try Happy Horse 1.0 free on Topview.

#1 Arena-Ranked · Joint Video + Audio · 7-Language Lip Sync · Open Source